Overview

Once a niche research interest, artificial intelligence and machine learning (AI/ML) technologies have quickly become commonplace with a growing influence over our lives. In turn, it has become increasingly important to consider the broader social impact of AI/ML research, including how to best mitigate risks related to malicious use, accidents, and other unintended consequences related to its dissemination.

After gathering insights from individuals across the AI/ML research community, the Publication Norms for Responsible AI Workstream has released a white paper providing six recommendations for publishing novel research in a way that maximizes benefits while mitigating potential harms. Reflecting the community-wide effort needed, these recommendations are directed at three key groups in the AI research ecosystem: individual researchers, research leadership, and conferences and journals.

To learn more about how you or your organization can use these recommendations to advance responsible publication practices in our field, please email scai@partnershiponai.org.

Key questions and challenges

Based on conversations with Partner organizations and domain experts, PAI has identified a number of high-priority questions and challenges which we believe are good starting points for tackling the issue of publication norms for responsible AI.

Machine learning is not the first community to face difficult questions about the responsible publication of potentially dangerous technology and research. Other fields have developed practices we can learn from, but even within those fields the problem is not entirely solved. We must also consider how AI/ML is similar to and different from these fields, in order to better understand which practices may be appropriate and where we may need to develop custom solutions.

The field of computer security is routinely confronted with the question of when and how researchers should release information that is potentially harmful to other parties. The typical case for such dilemmas is the discovery of a bug in a particular piece of software that causes it to be vulnerable to hacking. In those cases, there is a norm of ‘coordinated disclosure,’ which usually involves the discoverer of the vulnerability informing the company or community which makes the software before making the knowledge public.

The main difficulty for the AI/ML community in adopting this practice is that it is often unclear who should be notified about the harmful information. In computer security, there is usually a clear person or organization (for example, Microsoft, Apple, or whoever maintains the software) responsible for fixing the vulnerability. In the case of something like the ‘ability to create realistic fake video’, however, it is unclear whom to notify, since the vulnerability is not a technological one but rather a feature of the human visual system. One possibility would be to notify other researchers who are working on methods for detecting fake video, but since anyone can claim to be such a researcher, these claims would have to be vetted, which may be resource-intensive and could lead to accusations of gatekeeping. It is also hard to find the right people to mitigate a potentially harmful technology without announcing that the technology exists, which may itself be dangerous information to make known.

One of the most salient examples of a field that has been wrestling with responsible publication practices is synthetic biology. In 2019, scientists called for a moratorium on heritable gene-editing techniques after ethical concerns were sparked by the announcement of the birth of the first gene-edited babies in China the year before. In 2012, the journal Nature considered redacting parts of a paper on the mammalian transmissibility of avian flu due to biosecurity concerns. In 2018, a journal controversially published a paper describing the synthesis of horsepox, despite significant concerns from microbiologists that the information could be used to reintroduce smallpox to the world, illustrating that the question of responsible publication in this field is yet to be resolved.

Fields of research that deal with biohazards have developed biosafety and biosecurity techniques to mitigate risks. One example is the CDC’s biosafety levels, which categorize laboratory work based on risk and define corresponding containment precautions. It is conceivable that the AI/ML field could adopt a similar categorization process based on the risks and impacts of AI/ML research, and recommend different publication strategies for different levels.

Like AI/ML, nuclear engineering is a field that has immense positive applications but also carries significant risks of malicious use and accidents. Perhaps the most extreme example of secrecy in research is the Manhattan Project, the US Government’s secret research program to develop nuclear weapons. Research sites were heavily monitored, and only a very limited number of carefully vetted people had access to the research. In his 1945 speech, Oppenheimer (director of the Los Alamos Laboratory that developed the atomic bomb) analyses the difficult trade-offs between caution and openness, and between the benefits of nuclear energy and the devastation of nuclear weapons.

The term “born secret” is often associated with information policy regarding nuclear weapons, referring to information that is classified by default due to its importance to national security, and only potentially declassified after a formal evaluation. The policy is not without controversy, with some speculating that such blanket restriction may be unconstitutional.

In the wake of the 2011 Fukushima nuclear disaster, some have questioned whether the field should in fact be moving away from secrecy and towards transparency. The many benefits of transparency and openness are why approaches that simply restrict publication are undesirable, and why a more nuanced approach, developed thoughtfully with community input, is so important.

It is common national security practice around the world to place dissemination or release controls on sensitive information, for a variety of reasons. In the US, complex decisions about the disclosure and release of classified information can only be made by government officials with the training and legal authority to do so. These decisions aim to balance the case for releasing information (to support national security and foreign policy objectives, or to serve the public’s right or need to know) against the case for withholding it (to protect those same objectives). Outcomes range from full release, to partial release with some information redacted, to no release at all. This concept of ‘partial release’ is an example of a strategy we could explore as part of this project to determine how well it might work in the context of AI/ML.

There have also been efforts to consider the general question of when it is appropriate to make potentially hazardous information public, and how a lack of effective coordination can make dangerous situations more likely. In the context of computer science, some have advocated changing peer review norms to require a discussion of the potential negative impacts of research as well as the positive ones. This is an example of the kind of norm change we hope to explore as part of this project.

We are keen to further explore the similarities and differences between fields like these and AI/ML, and to better understand what solutions have been tried. We are also keen to learn from history; for example, when has secrecy caused problems, and are there examples of when limiting information flow has worked well?

Openness is a fundamental scientific value, responsible for many of humanity’s technological, social and cultural advances. One of the major successes of the AI/ML community is that it switched to publishing openly, for example on the arXiv preprint server, despite significant pressure to publish in traditional closed academic journals.

Without openness, we risk forming intellectual bubbles and falling prey to groupthink. Without peer review, it is harder for researchers to get feedback on their work and correct errors. Enabling others to reproduce experiments promotes confidence that research findings are robust and helps aspiring researchers learn and make progress. A norm of openness helps ensure beneficial technology reaches the public instead of being used only for private benefit, and helps build the trust and information sharing necessary for scientific communities to thrive. For high-stakes research, openness can be particularly valuable because it enables further research into harm mitigation techniques, and facilitates public conversations about the potential harms and risks that may otherwise have been overlooked.

There are risks associated with changing the status quo regarding publication norms, especially if doing so could erode the many benefits provided by open science. Yet we must also acknowledge the need to push the boundaries of AI/ML responsibly, with an eye to the potentially large-scale social effects. Rather than seeing the conversation on publication norms as opposed to open science, we hope to fully include open science advocates in our exploration. In most cases, the responsible thing to do is likely to publish the research as openly as possible, so that we can all enjoy the benefits. In cases where there may be risks associated with publication, it can be helpful to view openness as a spectrum, where different publication strategies can be employed to mitigate risks while maximizing openness.

It may not be possible for us all to agree on what the perfect level of openness is for any given piece of research, but we are highly sensitive to the trade-offs involved and welcome community input as we navigate this complex issue.

Researchers often write about the beneficial applications of their work when applying for grants or in academic submissions. However, it is not currently as common a practice in AI/ML as it is in fields like biomedicine to also address the potential risks and harms of the work. In the same way that some fields encourage researchers to write about the side effects or environmental implications of their work, should it become common practice in AI/ML for researchers to include a thorough discussion of the risks and impacts of their work when applying for grants or submitting papers for publication? This idea has also been proposed for the computing research community in general, and NeurIPS recently announced its updated submission process, which requires authors to consider the broader social impacts of their research, both positive and negative.

One potential approach to incentivizing a more substantial discussion of the risks and impact of research would be to host open online discussions where researchers can analyze the risks and impacts of each other’s work, similar to the way platforms like OpenReview enable peer-to-peer paper reviews and discussions. Contributors could get professional credit for insightful comments that identify overlooked risks.

An important question is whether publishing the potential harms of new research could in fact end up giving bad actors ideas for malicious use. To what extent are those motivated to cause harm held back by a lack of ideas versus a lack of resources? Is there a way to encourage discussion of risks in a more private way (for example, by asking researchers to submit a discussion of risks alongside any papers as a condition of acceptance, but not publishing the discussion itself)?

There is also the question of whether researchers are the right people to assess the risks and impact of their work – while they may be domain experts, researchers are rarely trained in risk assessment, nor do they necessarily have the social science knowledge required to accurately anticipate the social impacts of their work. Should responsibility for anticipating the risks and impact of work lie with the researcher, the organization, or with someone else? What tools do they need to do a good job?

Novel research can have complex second and third order effects that are often hard to anticipate, particularly for those without specialist historical, political, social, cultural, philosophical, and economic expertise. Researchers may not always have the breadth of expertise to accurately anticipate the risks and the impact of their work. So how can we help? What tools or services can we provide to help those who ask for guidance in navigating these complex issues?

One example is to provide a comprehensive guide for researchers to self-assess their work. Including the right questions could help them identify and mitigate, in advance, risks they might otherwise not have noticed. We’ve provided a first draft of such a guide in the Resources section, and welcome feedback on whether these questions are useful and what additional questions should be added.

Something this guide is currently missing is a taxonomy of the potential harms that AI/ML research could cause (such as mental and emotional harms, threats to physical safety, infringement on rights, political or institutional degradation, etc.), and strategies for mitigating these types of harms. Similarly, an area of further work could be to map out different types of actors (across dimensions of technical competency, computational resources, time, etc.) to more accurately model potential threats and identify which release strategies are likely to be most effective in each case.

Another example of a service that might be helpful is access to a pool of experts who are able to advise on difficult publication decisions and facilitate considerations of risks. We envisage researchers and organizations soliciting input from these experts to help them generate a responsible release strategy, but these experts could potentially also be available to grant and peer reviewers as well as anyone else involved in publication decisions. An organization such as PAI could coordinate the pool of experts, inviting an appropriate subset of the experts for each case, and ensuring the use of rigorous Non-Disclosure Agreements to reassure companies that their work will not be leaked to competitors.

The pool could be made up of technology specialists, computer security experts, social scientists, ethicists, risk analysts, representatives of impacted communities, and even sci-fi writers (who specialize in imagining creative dystopian scenarios for technology). This approach has similarities to the concept of a ‘red team’ in computer security: a group that aims to improve an organization’s security and resilience by taking an adversarial role and trying to hack into its systems. Perhaps researchers and organizations who act on the advice of the panel could be awarded a certification or stamp of approval.

Beyond these ideas, what other tools and services would be helpful?

Many organizations working with AI/ML have released principles to guide their work responsibly, and some are exploring internal review processes designed to assess potential risks prior to publication. This is a similar concept to academic Institutional Review Boards (IRBs), although IRBs focus on protecting the rights and welfare of human research participants, and are generally used before the research takes place rather than at the point of publication.

The format these reviews could take, and their level of formality, ranges from a simple checklist for individual researchers to an extensive multi-stage, multi-stakeholder approval process. The main advantage of an internal review process is to provide organizations and researchers with a more thorough understanding of the risks of their research before it is released, so that they can make an informed publication decision, and can implement harm mitigation measures where needed. While this approach has obvious social benefits, there are also good reasons why it is in the interest of organizations themselves, including:

- Better estimation and management of risks

- Reduced likelihood of causing harm, and associated PR or legal issues

- Increased public trust in the organization

- Reassuring employees that the social impact of their work has been considered thoughtfully

- Demonstrating ‘due diligence’ to regulators, policy makers, and law enforcers

Despite these advantages, there are a number of implementation challenges in designing effective review processes, such as:

- Avoiding adding unnecessary ‘bureaucratic red tape’ that could inconvenience researchers, cause “bottlenecks,” and potentially stall progress – or incentivize researchers to avoid the review process altogether if it is too cumbersome. One way to address this is to provide a way of swiftly identifying low-risk work so that publication can proceed quickly while flagging higher risk work for more careful consideration.

- Determining which voices and perspectives to include to ensure a fair and effective review process while avoiding concentrations of power and excessive gatekeeping.

- Ensuring smaller organizations that do not have the resources for an extensive review process are not unfairly disadvantaged compared to larger and better-resourced organizations if internal review becomes an industry standard.

- Accounting for factors that might distort the review process away from social benefit. Some incentives skew in the direction of openness, such as the incentive to “publish or perish,” commercial reputational incentives, or indirect market power (e.g. gaining first mover advantage by releasing open source infrastructure that is then used by many others). Other incentives skew towards keeping research closed, such as risk aversion (it may be tempting to ‘err on the side of caution’ and not publish, despite the research in fact being safe and socially beneficial), and competitive advantages of keeping advances secret.

As part of this project, we hope to learn more about how to design effective internal review processes so that we can provide guidance to organizations interested in adopting them.

Successful implementation of responsible publication practices requires coordination across the research community. Processes that are developed and executed in isolation are less effective, and may end up clashing with and undermining each other. Examples of challenging scenarios that could arise without coordination include:

- An organization decides to delay the release of their work until they have worked on harm mitigation methods, but a competitor takes advantage of this delay to launch their own similar system before harm mitigation methods are in place.

- A research team decides it would not be responsible for them to publish their training data, but their paper is rejected from a conference because the conference has a policy requiring all data to be made public.

- An organization goes through a thorough risk analysis process and concludes it is not safe to publish their latest research in full. However, they misjudge which aspects of the research can be published without harmful side effects. As a result, a student uses the published parts to reverse engineer the system and makes it public, generating harmful side effects.

- A researcher attempts to analyse the risks and impact of one of their research projects, but they overlook an obvious way it could harm vulnerable communities.

- A research team receives funding to undertake a project. Halfway through, they realize there are significant risks to publishing some aspects of the work, but their funding was contingent on all aspects of the work being openly published.

- A talented early-career researcher chooses to work for an organization that does not have an internal review process to assess risky research, because the ability to openly publish everything is advantageous to their career prospects.

- A researcher loses out on receiving professional credit for a discovery because they were worried about it being misused, and there was no way to register their research insight without publishing the work in full.

- A commercial entity develops an overly restrictive release policy that claims to be motivated by responsible publication but is actually a way to protect intellectual property under the guise of social responsibility.

As a multistakeholder organization, PAI is well-placed to coordinate community discussions and experiments around publication norms. We are interested in understanding how we can avoid scenarios like those listed above, and whether there are other potential coordination pitfalls we haven’t yet considered.

Resources

The resources presented below may be useful for those navigating publication decisions or interested in helping shape community publication norms. These resources are currently “works-in-progress.” We welcome feedback and comments to help us iterate and improve them.

As a researcher, you might be able to significantly reduce the risks of publishing your work while maintaining most of the benefits by considering different scopes of release.

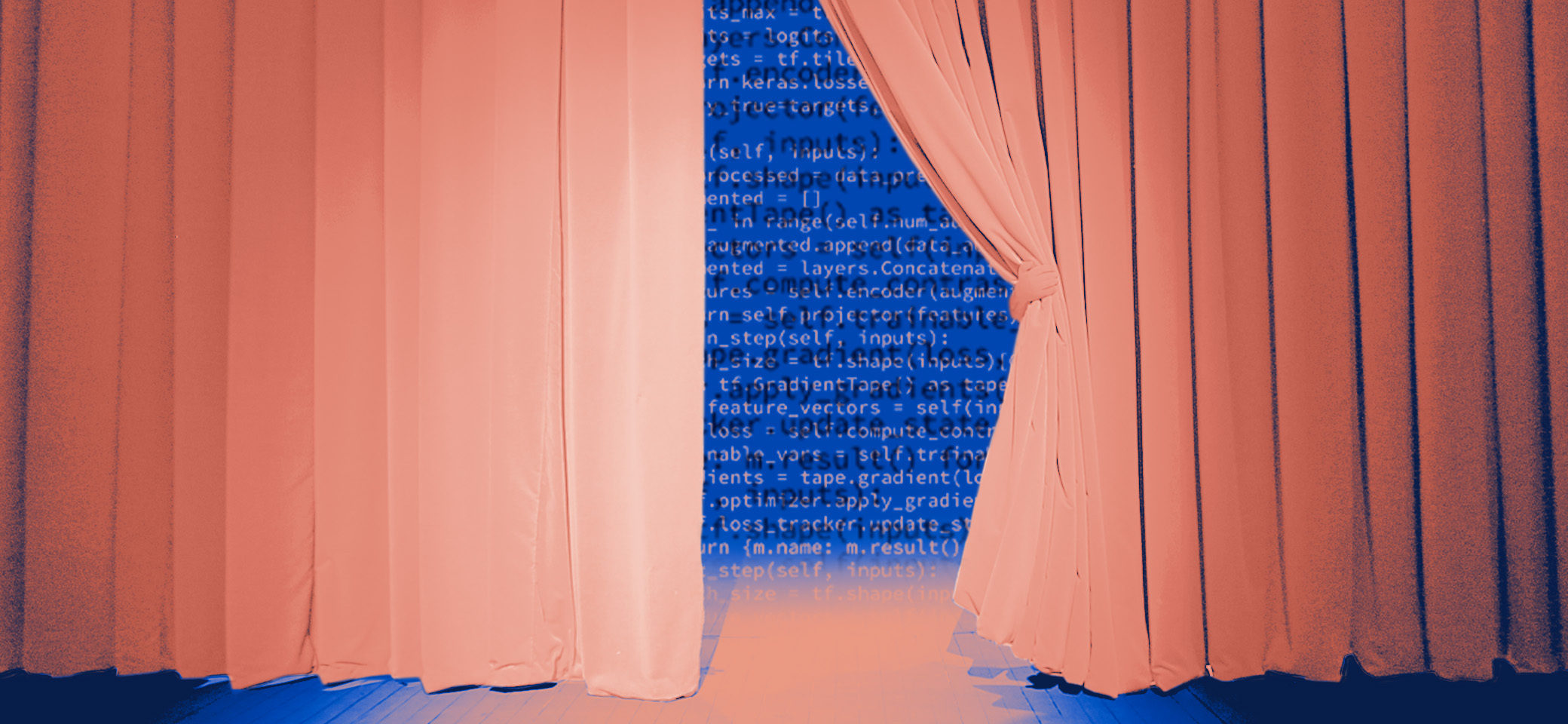

For instance, sometimes it is highly valuable to communicate a particular insight, but there may be concerns around releasing the artifact of that insight, such as a trained model. A researcher may want to release a trained model that has some pro-social capability, but without fully disclosing the system that was used to train such a model, due to concerns about the potential for malicious use. A researcher could also release their work in stages, to observe how it is adopted before releasing further insights, or to work on harm mitigation techniques in the interim.

In many cases, using alternative release strategies will not guarantee that the research avoids harmful use permanently. Instead, these strategies are designed to make it more difficult and inconvenient for malicious actors to access or replicate the work. This would not only reduce the number of actors with sufficient resourcing and motivation to use the work maliciously, but could also buy crucial time to prepare for potential negative consequences.

A researcher could delay the publication of their research until they are satisfied that harm mitigation measures are in place (delayed release). They could release research in stages, to observe the effect of releasing less sophisticated versions before releasing more advanced systems (staged release). They may decide to hold off on publishing until an external event triggers them to do so, for example, when it becomes clear that the benefits outweigh the costs, or when another group is close to publishing similar work (evented release). They may pre-determine a date for release and announce it, committing them to openness but with a grace period to allow them or others to prepare (timed release). They could also announce a date to re-evaluate whether to release additional specific research artifacts in the light of new developments (periodic evaluation).
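The options above can be made concrete by writing a release plan down as data. The sketch below is a hypothetical illustration under our own assumptions, not an established tool: the `Stage` fields, the function names, and the example dates and trigger event are all invented for the example. Each stage is released on a pre-announced date (timed release) or when a named external event occurs (evented release); stages are released strictly in order (staged release); and an optional re-evaluation date flags withheld artifacts for review (periodic evaluation). A delayed release is simply a stage whose date is set for when mitigations are expected to be ready.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Stage:
    """One stage of a hypothetical release plan (illustrative fields only)."""
    artifact: str                          # what this stage makes public
    release_on: Optional[date] = None      # timed release: pre-announced date
    trigger: Optional[str] = None          # evented release: named external event
    reevaluate_on: Optional[date] = None   # periodic evaluation checkpoint


def released_artifacts(stages, today, events):
    """Artifacts public as of `today`, releasing stages strictly in order.

    A stage is released once its date has passed or its trigger event has
    occurred; every later stage waits for it (staged release).
    """
    released = []
    for stage in stages:
        date_reached = stage.release_on is not None and today >= stage.release_on
        event_fired = stage.trigger is not None and stage.trigger in events
        if not (date_reached or event_fired):
            break
        released.append(stage.artifact)
    return released


def due_for_reevaluation(stages, today, events):
    """Withheld artifacts whose re-evaluation date has arrived."""
    released = set(released_artifacts(stages, today, events))
    return [
        s.artifact
        for s in stages
        if s.reevaluate_on is not None
        and today >= s.reevaluate_on
        and s.artifact not in released
    ]


# Hypothetical plan: paper and small model first, larger artifacts later.
plan = [
    Stage("paper and small model", release_on=date(2024, 1, 15)),
    Stage("medium model", release_on=date(2024, 4, 15)),
    Stage("full model", trigger="comparable model published elsewhere",
          reevaluate_on=date(2024, 7, 1)),
]
print(released_artifacts(plan, date(2024, 5, 1), set()))
# ['paper and small model', 'medium model']
print(due_for_reevaluation(plan, date(2024, 7, 2), set()))
# ['full model']
```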

There may be ways to share the work with certain trusted or low-risk groups, rather than releasing everything publicly. Releasing to trusted collaborators, specific academics, or relevant experts may enable researchers to explore harm mitigation techniques, and, where appropriate, continue to expand on the work. Adopting an ‘ask for access’ policy allows others to critically assess, reproduce and/or build on the work, while reducing the likelihood of malicious use. Sharing aspects of the work with trusted media in conjunction with careful communication may help ensure the research is reported on responsibly.

Machine Learning research usually comprises a number of components, each of which can be considered for release separately, and to different degrees, as listed below.

Research insights: Research insights are disseminated in a variety of ways, including papers, blog posts, posters, conference talks, and reports. The level of detail provided can be dialed up or down depending on the estimated risk. In some cases, even announcing that a new capability horizon has been reached could be dangerous; in others, it may be possible to provide significant detail while obscuring some key facts.

Training, validation, and/or test data: The advanced capabilities of AI/ML systems are largely thanks to the size and quality of the datasets used to train them. Restricting the availability of this data may help prevent malicious actors from training their own highly capable systems. Alternatives to releasing the full dataset include releasing only a small dataset to enable evaluation of results, or releasing an encrypted dataset to verify certain model traits.

Model: It may be possible to publish limited, controlled or otherwise altered versions of the AI/ML model. Examples could be different model sizes, different fine-tunings of the model, or a model trained on a specially selected dataset.

Code: Similarly, it may be possible to limit misuse by limiting or adjusting which aspects of the code are released. Machine learning code includes elements that describe the training procedure, provide the infrastructure to create the project, construct the training environments, and represent the final trained model. Controlling the release of some of these elements affects the extent to which the model could be reproduced or maliciously used. For instance, code that is designed to train a model on a single GPU has a much lower potential for malicious use than code that allows the training process to be easily scaled up and run on hundreds of GPUs.

An API or user interface: If the primary goal of the research is to create a socially beneficial application, it may be possible to achieve this without publishing the underlying workings of the system, by creating, for example, a secure user interface or API that enables people or programs to interact with the system and make use of its outputs, without full access to the underlying source code. This approach also enables additional degrees of control over use through mechanisms such as rate-limiting of API usage.
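As a minimal sketch of the rate-limiting idea, the code below assumes a token-bucket limiter (one common technique, chosen here for illustration; the text does not prescribe a method) in front of a model that callers can query but not download. The class names, the `api_key` parameter, and the default limits are hypothetical, and a deployed API would need much more, such as authentication and persistent state.

```python
import time


class TokenBucket:
    """Allows bursts of up to `capacity` calls and a sustained `refill_rate` calls per second."""

    def __init__(self, capacity, refill_rate, clock=time.monotonic):
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Add tokens for the time elapsed since the last call, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RateLimitedModelAPI:
    """Hypothetical wrapper: callers get the model's outputs, never its weights or code."""

    def __init__(self, model_fn, capacity=5, refill_rate=1.0, clock=time.monotonic):
        self._model_fn = model_fn
        self._capacity = capacity
        self._refill_rate = refill_rate
        self._clock = clock
        self._buckets = {}  # one bucket per API key

    def query(self, api_key, prompt):
        if api_key not in self._buckets:
            self._buckets[api_key] = TokenBucket(self._capacity, self._refill_rate, self._clock)
        if not self._buckets[api_key].allow():
            raise PermissionError(f"rate limit exceeded for {api_key}")
        return self._model_fn(prompt)


# Usage with a stand-in "model" and a fake clock so the behaviour is deterministic.
fake_time = [0.0]
api = RateLimitedModelAPI(str.upper, capacity=2, refill_rate=0.5, clock=lambda: fake_time[0])
print(api.query("alice", "hello"))  # HELLO
print(api.query("alice", "hello"))  # HELLO
try:
    api.query("alice", "hello")     # third call in the same instant
except PermissionError as err:
    print(err)                      # rate limit exceeded for alice
fake_time[0] = 2.0                  # 2 s at 0.5 tokens/s restores one token
print(api.query("alice", "hello"))  # HELLO
```

A per-key bucket lets short bursts through while capping sustained volume, which is the kind of control over use the paragraph describes; the limits themselves are a policy choice.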

It may be possible to specify guidelines, permissions, or terms of service that delineate the way the research can be used or shared. While this may not deter malicious actors willing to defy the restrictions, it may help limit the spread of applications that the researcher did not intend.

These restrictions could be governed by a range of methods, including a license, a memorandum of understanding, or a formal, legally binding, contractual clause. Examples of permissions and use restrictions include:

- Do not retransfer to a third party without obtaining permission from the original research team.

- Protect this dataset/code/model/application from public access.

- The dataset/code/model/application is used only for specified purposes.

- Consult the original research team in advance if you think it is time to consider releasing the dataset/code/model/application, or before using it for purposes other than those for which it was intended.

We expect that, in most cases, a release strategy will be developed by considering a combination of these four aspects.

Before going ahead with an alternative release strategy, there are some considerations to be aware of. Research reproducibility is a core element of open scholarship and of the evaluation and validation of one’s work, and it also enables researchers to build upon each other’s work. Withholding key aspects of the research in order to limit or prevent reproducibility is a useful safeguard, but it also limits these important benefits. Some of these alternative release strategies face practical limitations to implementation, such as the difficulty of vetting trusted audiences, or the difficulty of enforcing usage restrictions.

Another consideration is that if you choose a delayed release approach, you may want to think about ways to ensure you get credit for any ground-breaking work. Some ideas we are considering include publishing a cryptographic hash of the paper and releasing the paper itself only once it is safe for the research to be made public (anyone can then check that the released paper matches the earlier hash), or sending the research to a trusted party (such as a journal) who can verify the date of the work even if it is not published until later. These are likely to require community coordination, but here’s a rough example of how this could play out:

Step 1: Alert reviewers to a possible timed release strategy. Add a note to the submission that explains that the authors are conducting a responsible publication review to determine whether a delayed release strategy for this paper would be appropriate. Describe the type of responsible publication review that is being undertaken, and request the opinion of submission reviewers regarding a timed release.

Step 2: If the paper is accepted, but the authors believe that a timed release is preferable, the authors can request that the organization (journal, conference, etc.) delay publication until a specific date. The submission can be withdrawn if the organization does not agree.

Step 3: Researchers are advised to notify their employers and add the submission to a log of delayed publications. Researchers can share their log of accepted but delayed publications with new and prospective employers.
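The hash-based idea can be sketched as a simple commit-reveal scheme. This is a hypothetical illustration, not a protocol any venue has adopted: the authors publish a SHA-256 digest of the manuscript combined with a random salt, keep both private, and later release the manuscript and salt so that anyone can recompute the digest and confirm that the released text is the one committed to. The salt is our own addition; it prevents others from confirming guesses about the withheld text by hashing them. A digest proves only what was written, not when; establishing the date still depends on posting the digest somewhere with a trustworthy timestamp, such as the trusted party mentioned above.

```python
import hashlib
import hmac
import secrets


def commit(manuscript: bytes) -> tuple[str, bytes]:
    """Return (digest to publish now, salt to keep private until release)."""
    salt = secrets.token_bytes(32)  # random salt: blocks guess-and-hash checks on the text
    digest = hashlib.sha256(salt + manuscript).hexdigest()
    return digest, salt


def verify(digest: str, manuscript: bytes, salt: bytes) -> bool:
    """Check a later-released manuscript and salt against the earlier digest."""
    recomputed = hashlib.sha256(salt + manuscript).hexdigest()
    return hmac.compare_digest(recomputed, digest)


paper = b"Full text of the withheld paper."
digest, salt = commit(paper)
print(digest)  # post this publicly now; keep `paper` and `salt` private
# At release time, publish `paper` and `salt`; anyone can then check them:
print(verify(digest, paper, salt))                # True
print(verify(digest, paper + b" Edited.", salt))  # False
```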

Before publishing novel research, it can be helpful to think through the potential risks and impacts of the work. Here we provide examples of questions that might help with this. This list of basic considerations and associated questions was generated in consultation with researchers and experts, and will continue to be refined – if you have feedback please submit thoughts via our comment form.

The following questions can stimulate considerations of impact:

- What makes this research important? What beneficial effects do you hope it will have? If this research never occurred, what would the world be missing?

- Who will use your research? Is it foundational research, or could it be directly applied or productized? What capabilities does your work unlock?

- Does your research contribute to progress on safety, security, beneficial uses, or defence against malicious use?

- If, in one year, you looked back and regretted publishing the research, why would this have happened? If, in one year, you looked back and regretted not publishing the research, why would this have happened?

- Visualize your research assistant approaching your desk with a look of shock and dread on their face two weeks after you publish your results. What happened?

- What is the worst way someone could use your research finding, given no resource constraints?

- What is the most surprising way someone could use your research finding?

- How would a science fiction author turn your research into a dystopian story? Are dystopian narratives about the research plausible, or far-fetched? Does the technology actually create circumstances that are prone to accidents or malicious outcomes?

- You wake up one morning and find your research splashed over the front pages of a major newspaper. How do you feel?

- In 20 years, how is society different because of your research? What are possible second and third order effects of your work?

Asking the questions below can help researchers model potential threats:

- How could your research be used maliciously? Think about how similar work has been misused in the past.

- Who might want to use your research maliciously? Do they have the right resources? Consider computational capabilities, finances, influence, technical ability, domain expertise, and access to data. How can the research be made more robust against misuse by these actors?

- How likely is it that another group will make this discovery if you don’t publish? How soon? Can you predict their actions?

- How could your system be modified to cause intentional or unintentional harm? What are the computational costs of each modification? Consider:

  - Training a model from scratch on a different dataset

  - Fine-tuning a model on a different dataset

  - Using the dataset to train a different model, or a more powerful model with the same objective

  - Integrating the model with another system to create harmful capabilities

- How could your research be applied in unexpected ways for commercial gain?

- How could your research be involved in an accident with harmful consequences?

While we do not yet have a thorough taxonomy or bibliography of all the types of harms that technology can engender, you may wish to consider potential mental, physical, institutional, social, cognitive, political, and economic harms that could result from your work. Some useful tools for this are listed in the further reading section. Considerations that highlight possible harms to vulnerable communities include:

- What fairness definitions have you used to evaluate your algorithm? At what points in the development process were fairness procedures used?

- Which populations or communities will this technology negatively affect if deployed in the scenarios you envision? Will some groups be disproportionately affected? Consider people with low digital or AI literacy, such as the elderly. Consider consulting with affected communities and working through possible conflict scenarios.

The questions below are designed to help researchers consider publication strategies that could help mitigate the risks and harms identified in the modeling, visioning and mapping exercises above.

- What research insights and technologies could help mitigate the harms you have identified (both existing and potential)? Consider reaching out to parties (researchers, organizations) who could assist with this process.

- Who should be made aware of this research in advance of public release? Should any of these parties be notified upon public release?

  - Specific researchers and/or academic groups?

  - Affected users?

  - Affected businesses?

  - Vulnerable communities?

  - Trusted media sources?

- Consider the variety of possible release strategies. To maximize the benefits and minimize the harms, could this research benefit from a release strategy that uses delayed release; release to limited, specific audiences; release of certain artifacts but not others; and/or licenses or guidelines that restrict use?

Further reading

- Artificial Intelligence Research Needs Responsible Publication Norms: Provides context on the current discussion around publication norms in AI, sparked by OpenAI’s decision to use a staged release for its advanced language model GPT-2.

- Reducing malicious use of synthetic media research: Considerations and potential release practices for machine learning: A paper that provides some tools and analogies for thinking about release practices specifically in the context of synthetic media (note: the authors are also contributors to PAI’s Publication Norms for Responsible AI project).

- The Offense-Defense Balance of Scientific Knowledge: Does Publishing AI Research Reduce Misuse? One key question in developing responsible publication norms for AI is under what circumstances publishing research benefits harm-mitigation researchers more than it empowers adversarial actors. This paper explores that question.

- The battle for ethical AI at the world’s biggest machine-learning conference: This piece discusses the way AI conferences are reconsidering their submissions process to align with responsible practices.

- Forbidden knowledge in machine learning — Reflections on the limits of research and publication

- Consequence Scanning Kit from Doteveryone: Tools to help think about the intended and unintended consequences of technology products and services.

- The Malicious Use of Artificial Intelligence: A report that thoroughly analyzes possible malicious use scenarios for AI.

- Adversary Personas game: A tool developed by researchers at UC Berkeley to help think about cybersecurity threats, which may also provide inspiration for thinking through AI-related threats.

- Diverse Voices: A method developed for tech policy that incorporates experiential experts from under-represented groups.

- A Guide to Writing the NeurIPS Impact Statement: A framework from researchers at the Centre for the Governance of AI.

- Time to rethink the publication process in machine learning by Yoshua Bengio

- Troubling Trends in Machine Learning Scholarship by Zachary C. Lipton and Jacob Steinhardt