Overview

ABOUT ML (Annotation and Benchmarking on Understanding and Transparency of Machine Learning Lifecycles) is a multi-year, multi-stakeholder initiative led by PAI. This initiative aims to bring together a diverse range of perspectives to develop, test, and implement machine learning system documentation practices at scale.

The initiative is an ongoing, iterative process designed to co-evolve with the rapidly advancing field of AI development and deployment. In recognition that documentation is both an artifact and a process, ABOUT ML is structured into an artifact workstream and a process workstream.

Read the ABOUT ML Reference Document here.

Research

Get Involved

Start Here

Contribute to future work

Our goal for 2021 is to design testable pilots in a multi stakeholder manner. To make this process more tractable, we’ve broken this into two different workstreams. Each button below leads to a key subtask in this process, and we invite you to share your thoughts, comments, and feedback on any that you are interested in.

Process workstream

Help us solve the challenge of how documentation can be created at scale within an organization.

Artifact workstream

Join the debate on what information stakeholders deserve to know about ML systems, and how that information should be presented.

Future Work

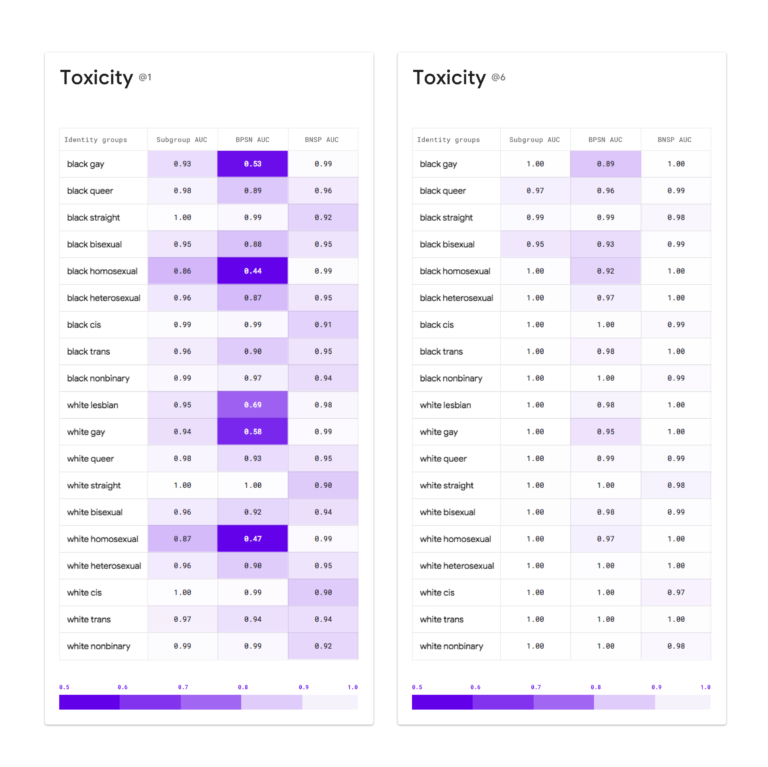

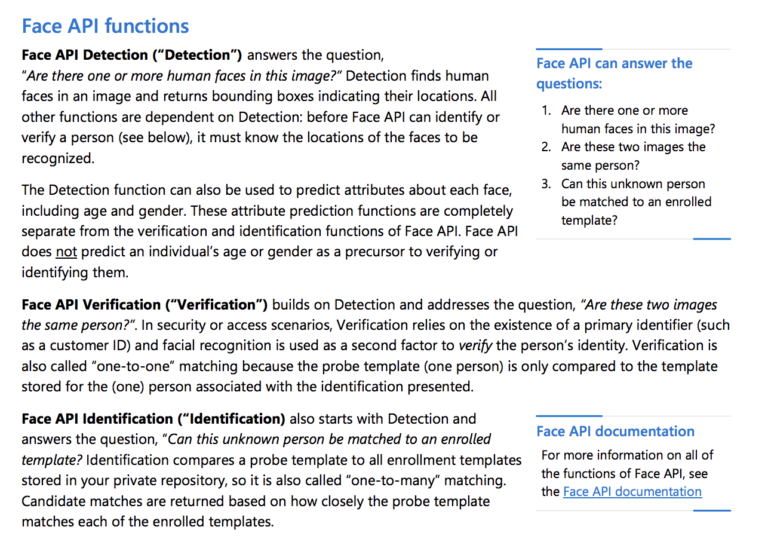

Deployed Examples of ML Documentation

See real-world deployed examples of ML documentation which can focus on datasets, models, and ML systems. Provide your feedback on these examples as part of ABOUT ML’s public feedback comment process.

To guide ABOUT ML, let the steering committee know what you think of these examples. Which questions are useful? What questions are these examples missing? Is there anything about the format of one of these examples that is effective? Leave a comment with the comment button on the left.

Advisors

The ABOUT ML group of advisors and experts is comprised of experts, researchers and practitioners recruited from a diverse set of PAI Partner organizations. We continue to provide meaningful updates and invitations for them to participate in the work. We are grateful for their contributions to this community work enabling responsible AI by increasing transparency and accountability with machine learning system documentation.

Himani Agrawal

Data Scientist

AT&T Research Labs

Norberto Andrade

Privacy & Public Policy Manager

Facebook

Amir Banifatemi

General Manager, Innovation & Growth

XPRIZE

Rachel Bellamy

Principal Researcher & Manager

Human-AI Collaboration IBM

Umang Bhatt

Student Fellow

Leverhulme Centre for The Future of Intelligence

Diane Chang

Distinguished Data Scientist

Intuit

Jacomo Corbo

Chief Scientist

Quantumblack

Daniel First

Associate/Data Scientist

Mckinsey/Quantumblack

Ben Garfinkel

Research Fellow

Future of Humanity Institute

Jeremy Gillula

Tech Projects Director

EFF

Jerremy Holland

Director of AI Research

Apple

Sara Jordan

Senior Counsel, AI & Ethics

Future of Privacy Forum

Joohyung Lee

Corporate VP/Head of Lab

Samsung

Brenda Leong

Senior Counsel & Director

of Strategy

Future of Privacy Forum

Tyler Liechty

Data Engineer

Deepmind

Lassana Magassa

Graduate Research Associate

Tech Policy Lab/University of Washington

Meg Mitchell

AI/ML Researcher

Amanda Navarro

Managing Director

PolicyLink

Sinead O’Brien

AI/ML Engagement Lead

BBC

Irina Raicu

Director, Internet Ethics Program

Markkula Center for Applied Ethics

Deborah Raji

Fellow

Mozilla Foundation

Becca Ricks

Researcher

Mozilla Foundation

Andrew Selbst

Postdoctoral Scholar

Data & Society

Ramya Sethuraman

Product Manager

Facebook

Moninder Singh

Research Staff Member

IBM

Spandana Singh

Policy Analyst

Open Technology Institute

Amber Sinha

Senior Programme Manager

Centre for Internet & Society

Michael Spranger

Senior Research Scientist

AI

Collaboration Office Sony

Andrew Strait

Associate Director of Research Partnerships

ADA Lovelace Institute

Hanna Wallach

Senior Principal Researcher

Microsoft

Adrian Weller

Senior Research Fellow

Leverhulme Center for the Future of Intelligence

Abigail Wen

Author & Filmmaker

Alexander Wong

Co-Director

Vision & Image Processing(VIP) Research Group(University of Waterloo)

Jennifer Wortman Vaughan

Senior Principal Researcher

Microsoft

Andrew Zaldivar

Senior Developer Advocate

Google

We also consult with many of our other Partners.

Steering Committee

Michael Hind

Distinguished Research Staff Member

IBM Research

Meg Mitchell

Research and Chief Ethics Scientist

Hugging Face

Kush R Varshney

Distinguished Research Scientist and Manager

IBM Research

Hanna Wallach

Partner Research Manager

Microsoft Research

Jennifer Wortman Vaughan

Senior Principal Researcher

Microsoft

Origin Story

As you explore ABOUT ML, we invite you to learn more about this work’s origins and the amazing researchers who helped from the very beginning.

Notably, Hanna Wallach, Meg Mitchell, Jenn Wortman Vaughan, Timnit Gebru, Lassana Magassa, and Jingying Yang were instrumental in the foundations of the work and we thank them for their significant contributions. Francesa Rossi and Kush Varshney, both from IBM, and Eric Horvitz at Microsoft were also key contributors in making this work possible. Please read more about the origins of ABOUT ML and contributors to the project below.